Last updated at Fri, 03 Nov 2017 20:06:52 GMT

What is Acceptance Testing?

“Acceptance testing is a test conducted to determine if the requirements of a specification or contract are met.” (Wikipedia definition) In simple words, acceptance tests check whether the software that we have built matches the requirements that were provided.

The Magical Black Box

Acceptance testing is usually performed using the “black box” testing method.

The tester knows nothing about the internal implementation of the system. Their only concern is the functionality of that system: whether it matches the given requirements.

In black box testing, the system is provided with a series of inputs, and the outputs are checked against the specification. The test passes if the outputs match the requirements. The internal implementation can change at any time, and this must not affect the validity of the tests.

How to Pick Good Test Data

Your tests are only as good as the data that you use in them. It is important to come up with a data set that will trigger all possible paths through your system, without knowing any implementation specifics and relying only on the provided system requirements.

Two methods can be employed to identify the most suitable values to be used in testing:

- Equivalence Partitioning

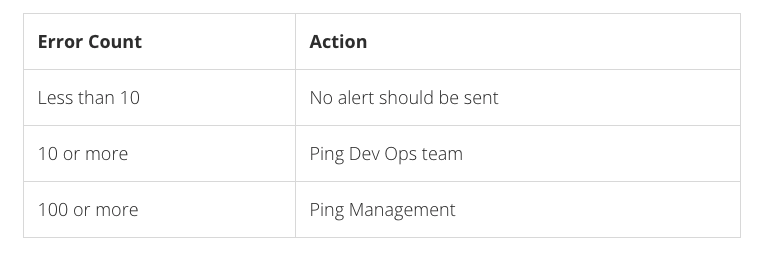

Equivalence Partitioning involves separating all possible input values (which can be an infinite set) into a finite set of partitions and using one value from each partition, on the assumption that every value in a given partition will produce an equivalent (but not necessarily identical) effect. For instance, suppose we have a simple system that alerts dev ops if the error count in their logs exceeds 10 occurrences per day and, if the error count goes above 100, also sends an email to management.

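The following partition table can be drawn:

| Errors per day | Expected behavior |
| --- | --- |
| 0 to 10 | No alert |
| 11 to 100 | Dev ops are alerted |
| 101 or more | Dev ops are alerted and management is emailed |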
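Expressed as test data, the three partitions from this example (0 to 10, 11 to 100, above 100) might look like the sketch below. The `alert_actions` function is a hypothetical stand-in for the real alerting system, and the action names and representative values (5, 50, 500) are assumptions picked purely for illustration.

```python
def alert_actions(error_count):
    """Hypothetical stand-in for the alerting system under test."""
    actions = set()
    if error_count > 10:
        actions.add("alert_dev_ops")
    if error_count > 100:
        actions.add("email_management")
    return actions


# One representative value per partition, paired with the expected outcome.
PARTITION_CASES = [
    (5, set()),                                     # partition: 0 to 10
    (50, {"alert_dev_ops"}),                        # partition: 11 to 100
    (500, {"alert_dev_ops", "email_management"}),   # partition: above 100
]


def run_partition_tests(system):
    """Return (value, passed) for each representative value."""
    return [(value, system(value) == expected)
            for value, expected in PARTITION_CASES]


if __name__ == "__main__":
    for value, passed in run_partition_tests(alert_actions):
        print(value, "passed" if passed else "FAILED")
```

Three values are enough here only because we assume every other value in a partition behaves like its representative.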

- Boundary Analysis

Boundary analysis involves testing values that are on the edge of every partition. That is, the value right on the boundary, one above it and also one below it. From the example above, 9, 10, 11, 99, 100 and 101 are boundary values.

It is useful to verify them in case a developer mixed up a “greater than” comparison operator with “greater than or equals.” Boundary analysis ensures that boundaries are correctly implemented and remain that way.
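To see why these six values matter, here is a small sketch that runs them against two hypothetical implementations of the alerting rule: one that uses “greater than” as required, and one where the developer slipped and used “greater than or equals”. Both functions and the action names are assumptions made for the example.

```python
def alert_actions(error_count):
    """Hypothetical correct implementation: strictly greater than."""
    actions = set()
    if error_count > 10:
        actions.add("alert_dev_ops")
    if error_count > 100:
        actions.add("email_management")
    return actions


def buggy_alert_actions(error_count):
    """Hypothetical buggy implementation: '>=' used instead of '>'."""
    actions = set()
    if error_count >= 10:
        actions.add("alert_dev_ops")
    if error_count >= 100:
        actions.add("email_management")
    return actions


# Boundary values from the text, with the outcome the requirements call for.
BOUNDARY_CASES = {
    9: set(),
    10: set(),
    11: {"alert_dev_ops"},
    99: {"alert_dev_ops"},
    100: {"alert_dev_ops"},
    101: {"alert_dev_ops", "email_management"},
}


def failing_values(system):
    """Return the boundary values for which the system gives the wrong result."""
    return [value for value, expected in BOUNDARY_CASES.items()
            if system(value) != expected]


if __name__ == "__main__":
    print("correct:", failing_values(alert_actions))
    print("buggy:  ", failing_values(buggy_alert_actions))
```

Mid-partition values such as 50 or 500 pass on both versions, so only the values sitting right on the boundary expose the mistake.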

Acceptance Tests In Practice – Behavior Driven Development

At Logentries, we use Behavior Driven Development (BDD) for acceptance testing. BDD is a branch of Test Driven Development (TDD), a methodology that advocates writing tests first to describe the desired functionality. The actual functionality is then implemented to match the requirements under test.

Therefore, tests drive the development, and we always go from red (a failure, since a test for the required functionality already exists, but the functionality is not yet implemented) to green (where the test passes, once the functionality is implemented).

BDD is particularly useful for Acceptance Tests, since User Stories are at the heart of BDD methodology. It advocates that a developer first writes a User Story. This User Story is used both for documenting the feature and triggering the acceptance tests.

The Logentries Engineering team uses Python Cucumber and Gherkin to write and execute our User Stories. Gherkin is a business-readable, domain-specific language. It can be used to clearly describe user stories, which are separated into multiple scenarios. Each scenario is a separate user story. Each line in a scenario is a requirement for that user story and is mapped onto a Python function that executes the actual test.
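To give a feel for how a scenario line ends up calling a Python function, here is a deliberately tiny, hand-rolled sketch. It is not the API of any particular Cucumber-style tool: the `step` decorator, the `run_scenario` helper and the scenario text itself are all assumptions written for this illustration.

```python
import re

# A hypothetical scenario written in Gherkin-style Given/When/Then lines.
SCENARIO = """\
Scenario: Dev ops are alerted when errors exceed the daily limit
  Given the log contains 11 errors today
  When the alert check runs
  Then dev ops receive an alert
"""

STEPS = []  # (compiled pattern, function) pairs


def step(pattern):
    """Register a function as the implementation of matching scenario lines."""
    def decorator(func):
        STEPS.append((re.compile(pattern), func))
        return func
    return decorator


@step(r"the log contains (\d+) errors today")
def given_error_count(context, count):
    context["error_count"] = int(count)


@step(r"the alert check runs")
def when_alert_check_runs(context):
    # Stand-in for calling the real system under test.
    context["alerted"] = context["error_count"] > 10


@step(r"dev ops receive an alert")
def then_dev_ops_alerted(context):
    assert context["alerted"], "expected an alert to be raised"


def run_scenario(text):
    """Run each line after the 'Scenario:' title through its matching step."""
    context = {}
    for line in text.splitlines()[1:]:
        _keyword, _, sentence = line.strip().partition(" ")
        for pattern, func in STEPS:
            match = pattern.fullmatch(sentence)
            if match:
                func(context, *match.groups())
                break
        else:
            raise LookupError(f"no step matches: {sentence!r}")
    return context


if __name__ == "__main__":
    run_scenario(SCENARIO)
    print("scenario passed")
```

The scenario text stays readable for non-developers, while each line is backed by a function that does the checking.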

Therefore, we achieve two goals at once:

- We end up with a well-documented system.

- We use this documentation to drive our tests.

Acceptance Tests, BDD and GUIs

BDD can be used to test the functional aspect of browser-based user interfaces. For instance, at Logentries we use the Selenium browser automation tool with its Python bindings to drive our UI Acceptance Tests. Each User Story is executed against the bleeding-edge version right inside the browser, and Python code instructs the browser to perform actions such as clicks, and then checks for the expected result (success messages, validation errors and so on).

It’s like watching a really fast user clicking through your UI.
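A single step of such a test might look roughly like the sketch below. The login page, its URL path, the element ids and the CSS selectors are all hypothetical. The function takes any Selenium-style driver as a parameter, so it assumes only the `get`, `find_element`, `send_keys`, `click` and `text` members that Selenium's Python bindings provide.

```python
def login_error_message(driver, base_url):
    """Submit a wrong password on a hypothetical login page and
    return the validation message the page displays."""
    driver.get(base_url + "/login")

    # "id" and "css selector" are the string values behind Selenium's
    # By.ID and By.CSS_SELECTOR locator constants.
    driver.find_element("id", "email").send_keys("user@example.com")
    driver.find_element("id", "password").send_keys("wrong-password")
    driver.find_element("css selector", "button[type=submit]").click()

    return driver.find_element("css selector", ".validation-error").text


# Possible usage with a real browser (requires Selenium and a browser driver):
#
#     from selenium import webdriver
#     driver = webdriver.Firefox()
#     try:
#         message = login_error_message(driver, "http://localhost:8000")
#         assert "Invalid" in message
#     finally:
#         driver.quit()
```

A real test would usually also wait for the page to update before reading the message. That is left out here to keep the sketch short.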

Mocks & Third Party APIs

Sometimes you want to test a part of your system that interacts with a third-party API, where the output of your system depends on the output of that API. Since you have no control over the third-party API, you cannot reliably test your own system: the output might differ depending on many factors, such as the date, the time or the parameters passed in. In that case, you can mock the third-party API to get predictable results.

Consider the following example: You have a checkout page where your user is presented with the final price. The user can select to pay in their own currency. Based on the selected currency, your system performs a currency conversion, and the conversion rate is obtained from your bank’s API. How can you test that the conversion works, since you don’t know the conversion rate at the time when the tests are running?

In this case, you can mock the API. For instance, you can temporarily modify your /etc/hosts file so that all requests to your bank are redirected to your mock, which always returns the same currency conversion rate. You then have predictable data and can test that €3 is always converted to $4 (or whatever it might be).
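Here is a minimal sketch of the idea using only the Python standard library. Instead of editing /etc/hosts, it simply hands the mock's address to the code under test, which is a simplification on our part. The `/rate` endpoint, the JSON shape, the fixed rate of 1.25 and the `convert` function are all assumptions invented for the example, not a real bank API.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

FIXED_RATE = 1.25  # arbitrary rate that the mock always returns


class MockBankHandler(BaseHTTPRequestHandler):
    """Mock of the bank's API: every GET returns the same conversion rate."""

    def do_GET(self):
        body = json.dumps({"rate": FIXED_RATE}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep test output quiet


def convert(amount, bank_host, bank_port):
    """Code under test: fetch the rate from the bank and convert the amount."""
    conn = http.client.HTTPConnection(bank_host, bank_port, timeout=5)
    try:
        conn.request("GET", "/rate?from=EUR&to=USD")
        rate = json.loads(conn.getresponse().read())["rate"]
    finally:
        conn.close()
    return round(amount * rate, 2)


def run_conversion_test():
    """Start the mock on a free local port, run one conversion, shut it down."""
    server = HTTPServer(("127.0.0.1", 0), MockBankHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return convert(4.00, "127.0.0.1", server.server_port)
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(run_conversion_test())
```

Because the mock always answers with the same rate, the expected converted amount can be hard-coded in the test.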

Conclusion

Acceptance tests are crucial to verifying that the final product matches the provided requirements. By utilizing Behavior Driven Development in Acceptance Tests, we ensure that each shipped feature is always clearly documented and that this documentation is used to drive functional tests.